Apple is building one AI model that can view, create and edit images

Following up on an earlier model called UniGen, a team of Apple researchers has introduced UniGen-1.5, a system that handles image understanding, generation, and editing within a single model. Here are the details.

A follow-up to the original UniGen

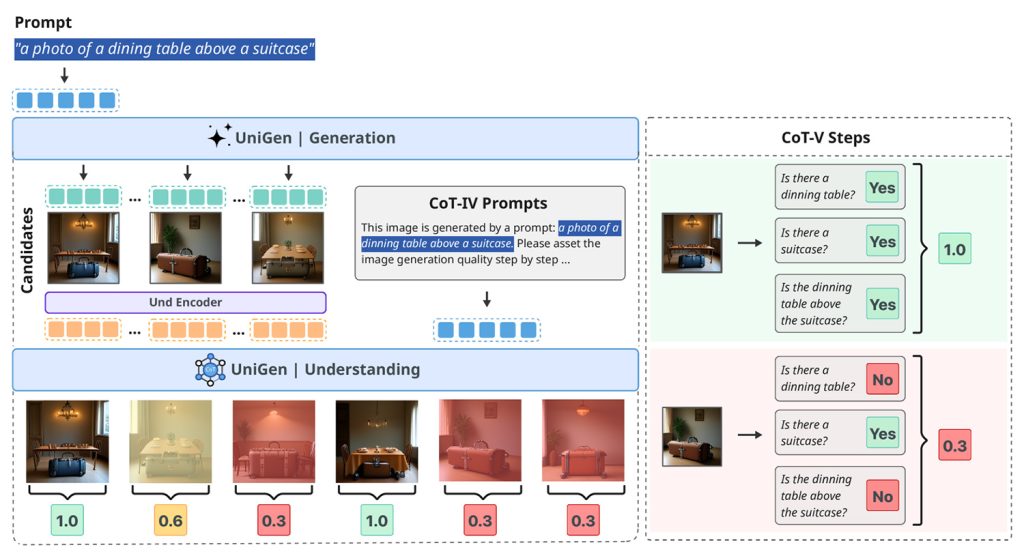

Last May, a team of Apple researchers published a study called UniGen: Enhanced Training & Test-Time Strategies for Unified Multimodal Understanding and Generation.

In this work, they presented a unified multimodal large language model capable of both image understanding and image generation within a single system, rather than relying on separate models for each task.

Apple has now published a follow-up to this study in a paper titled UniGen-1.5: Enhancing Image Generation and Editing through Reward Unification in Reinforcement Learning.

UniGen-1.5, explained

This new research extends UniGen by adding image editing capabilities to the model, still within a single unified framework, rather than splitting the understanding, generation, and editing across different systems.

Unifying these capabilities in a single system is challenging because understanding and generating images require different approaches. However, the researchers say that a unified model can use its understanding ability to improve generation performance.

According to them, one of the main challenges in image editing is that models often struggle to fully understand complex editing instructions, especially when the changes are subtle or highly specific.

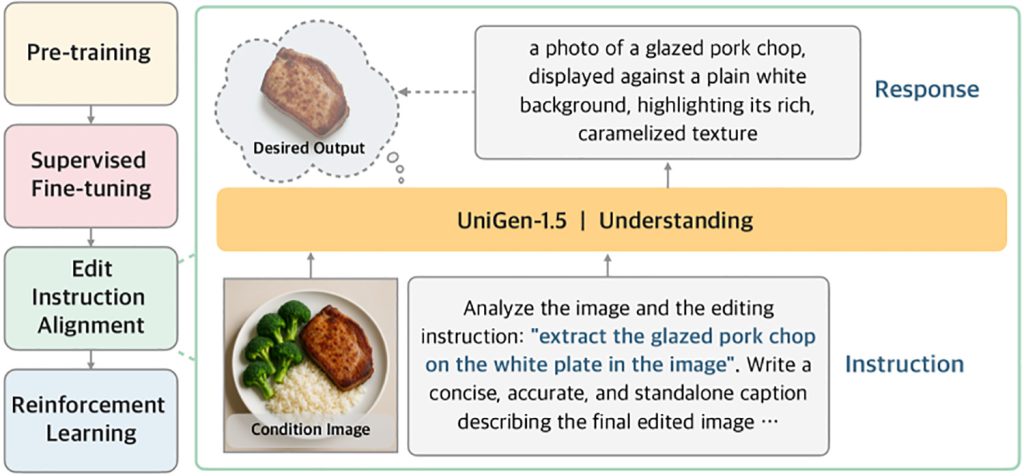

To solve this problem, UniGen-1.5 introduces a new post-training step called Edit Instruction Alignment:

“Furthermore, we observed that the model remains inadequate in handling various editing scenarios after supervised fine-tuning due to its lack of understanding of the editing instructions. Therefore, we propose Edit Instruction Alignment as a lightweight Post-SFT phase to improve the alignment between the editing instruction and target image semantics. Specifically, it takes the condition image and the instruction as inputs and is optimized to predict the semantic content of the target image through textual descriptions. Experimental results suggest that this phase is very beneficial for increasing editing performance.”

In other words, before asking the model to improve its outputs using reinforcement learning (which trains the model by rewarding better outputs and penalizing worse ones), researchers first train it to derive a detailed textual description of what the edited image should contain based on the original image and editing instructions.

This intermediate step helps the model better internalize the intended adjustments before generating the final image.
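To make the idea concrete, here is a minimal sketch of what a single training record for this alignment step might look like. Everything in it is an assumption made for illustration: the `EditAlignmentExample` class, the `to_training_pair` helper, the prompt wording, and the file name are hypothetical and do not come from the paper. The point it shows is only that the supervision target is a text description rather than an image.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EditAlignmentExample:
    """One hypothetical training record for the alignment step."""
    source_image_path: str   # the image to be edited
    instruction: str         # the edit request in plain language
    target_description: str  # text describing what the edited image should contain


def to_training_pair(example: EditAlignmentExample) -> tuple[dict, str]:
    """Split a record into model inputs and the text the model should predict.

    The target is text, not pixels: the model is asked to describe the
    edited image rather than draw it.
    """
    inputs = {
        "image": example.source_image_path,
        # Hypothetical prompt wording, chosen only for this sketch.
        "prompt": f"Instruction: {example.instruction}\nDescribe the edited image.",
    }
    return inputs, example.target_description


example = EditAlignmentExample(
    source_image_path="cat_on_sofa.png",
    instruction="Replace the sofa with a wooden bench.",
    target_description="A cat sitting on a wooden bench in a living room.",
)
inputs, target = to_training_pair(example)
print(inputs["prompt"])
print(target)
```

In this framing, a model that writes a good description has shown that it understood the instruction, before it is ever asked to produce the edited image.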

The researchers then use reinforcement learning in a way that is probably the most important contribution of the paper: they use the same reward system for both generating and editing images, which was previously challenging because the edits can range from minor tweaks to full transformations.
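A toy sketch can illustrate what sharing one reward across both tasks could look like. It assumes that every candidate output, whether generated from scratch or edited, is scored against a text description of the desired final image. The word-overlap scorer `toy_reward` is a deliberately crude stand-in for a learned reward model, captions stand in for actual images, and the group normalization in `normalized_advantages` is a common reinforcement learning convention rather than something this article confirms about the paper.

```python
from statistics import mean, pstdev


def toy_reward(image_caption: str, target_text: str) -> float:
    """Stand-in scorer: fraction of target words that appear in the caption.

    A real system would score the image itself with a learned reward model.
    """
    target_words = set(target_text.lower().split())
    caption_words = set(image_caption.lower().split())
    if not target_words:
        return 0.0
    return len(target_words & caption_words) / len(target_words)


def normalized_advantages(rewards: list[float]) -> list[float]:
    """Center and scale rewards within one group of candidate outputs."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    if sigma == 0:
        return [0.0 for _ in rewards]
    return [(r - mu) / sigma for r in rewards]


# Generation: the target text is simply the prompt.
gen_target = "a red bird on a branch"
gen_candidates = ["a red bird on a branch", "a blue bird on a branch", "a dog"]

# Editing (assumption): the target text describes the desired edited image.
edit_target = "a cat on a wooden bench"
edit_candidates = ["a cat on a wooden bench", "a cat on a sofa"]

tasks = [
    ("generation", gen_target, gen_candidates),
    ("editing", edit_target, edit_candidates),
]
for name, target, candidates in tasks:
    # The same reward function is applied regardless of the task.
    rewards = [toy_reward(c, target) for c in candidates]
    print(name, rewards, normalized_advantages(rewards))
```

Because the scorer only looks at the final output and a description of what it should show, the same function applies whether the edit was a minor tweak or a full transformation.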

As a result, when tested on several standard benchmarks that measure how well models follow instructions, maintain visual quality, and handle complex editing, UniGen-1.5 either matches or outperforms several state-of-the-art open and proprietary multimodal large language models:

“Thanks to the above efforts, UniGen-1.5 provides a stronger foundation for the advancement of unified MLLM research and produces competitive performance across benchmarks for image understanding, generation, and editing. Experimental results show that UniGen-1.5 scores 0.89 and 86.83 on GenEval and DPG-Bench, respectively, significantly outperforming current methods such as BAGEL and BLIP3o. In terms of image editing, UniGen-1.5 achieves an overall score of 4.31 on ImgEdit, surpassing recent open-source models such as OmniGen2 and is comparable to proprietary models such as GPT-Image-1.”

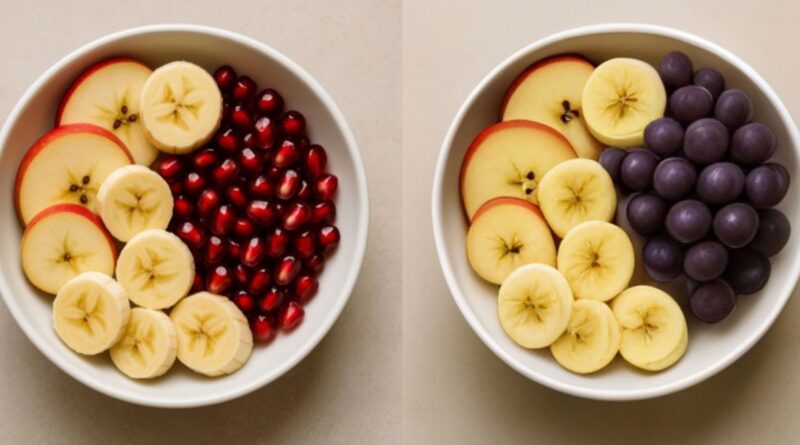

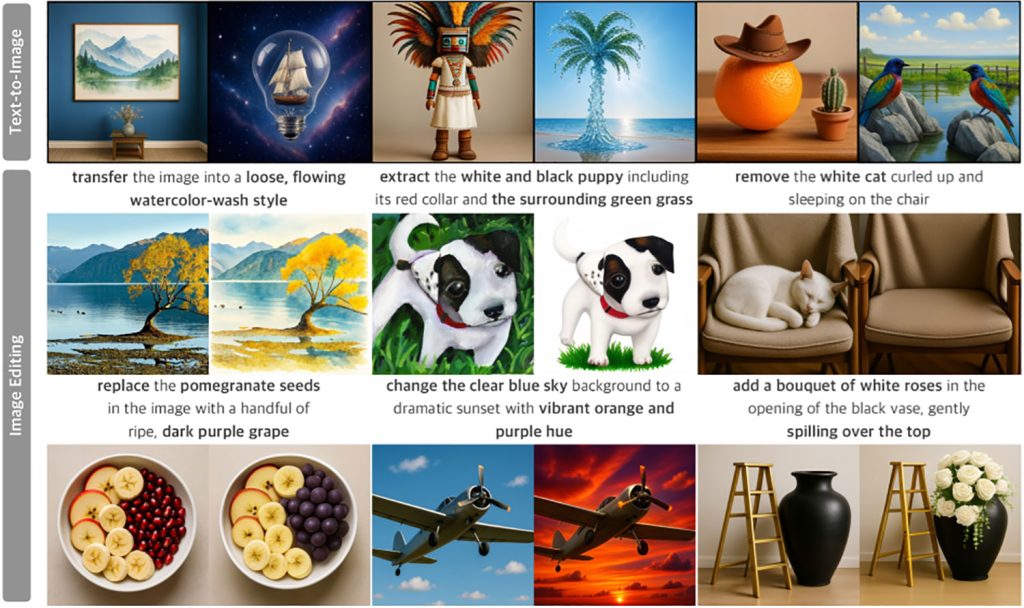

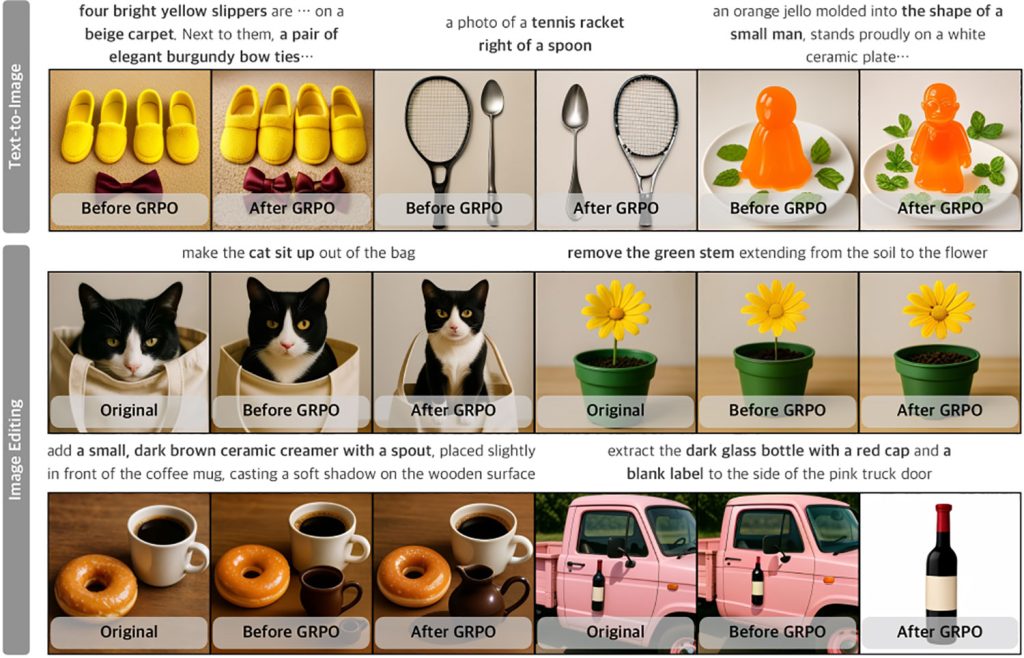

Here are some examples of UniGen-1.5's text-to-image generation and image editing (unfortunately, the researchers appear to have accidentally cropped the prompts for the text-to-image segment in the first image):

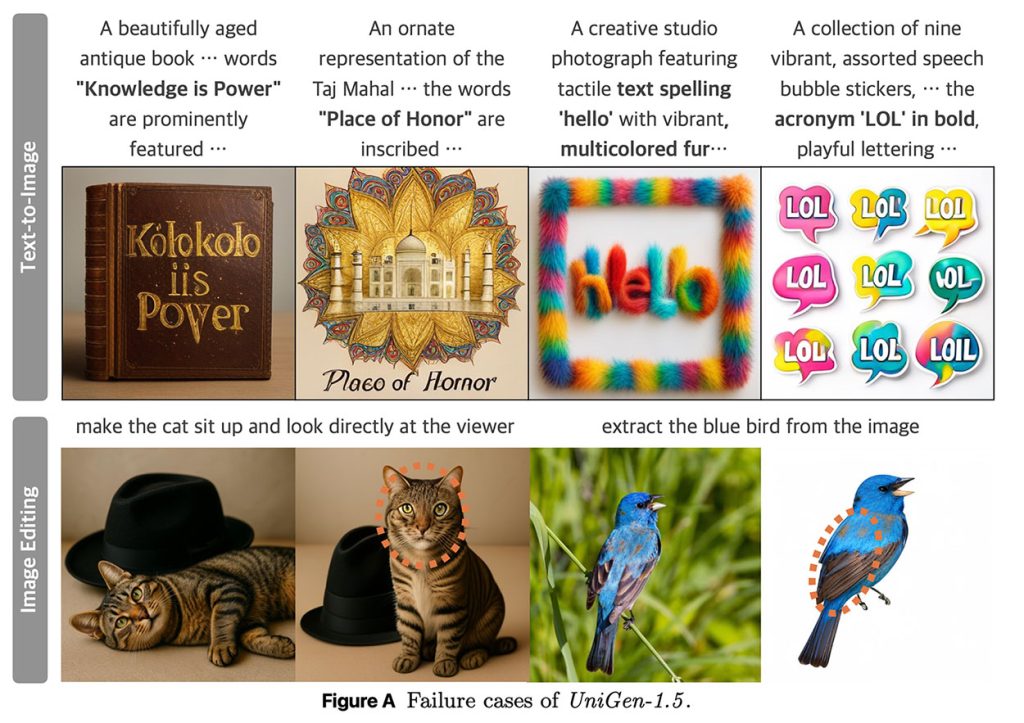

The researchers note that UniGen-1.5 struggles with rendering text inside images, as well as with identity consistency, under certain circumstances:

“Cases where UniGen-1.5 fails in both text-to-image generation and image editing tasks are shown in Figure A. In the first row, we present cases where UniGen-1.5 fails to render text characters accurately because the lightweight discrete detokenizer struggles to control the fine-grained structural details needed for text generation. In the second row, we show two examples with visible shifts in identity highlighted by a circle, e.g., changes in the texture and shape of a cat’s facial fur and differences in the color of a bird’s feathers. UniGen-1.5 needs further improvements to deal with these limitations.”

You can find the full study here.
